In a Discord, someone linked this Substack post by Gabriele de Seta, Project Leader of ALGOFOLK at the University of Bergen. It’s about Butterflies, which is like “what if you had a bunch of chatbots talking to you and each other” aka 90% of what advertising and algorithmic social media electricity is spent on.

De Seta pokes and prods at the text and image pile and, with a little pattern recognition and a little knowledge of how to get a language model to jump through your hoops, finds some seed prompts that exist in all the DMs. (They are not “u up” or “hey”; you should check out the post to see what they are.)

To introduce Butterflies, de Seta links to a TechCrunch article from June 2024: “Inspired by his experience spending time in online communities and making connections with people who ‘could just have been AIs’, (Vu) Tran started Butterflies ‘to bring more creativity to humans’ relationships with AI’.”

My brain stuck immediately on “could just have been AIs” and so I went to the article for the context:

“Growing up, I spent a lot of my time in online communities and talking to people in gaming forums,” Vu said. “Looking back, I realized those people could just have been AIs, but I still built some meaningful connections. I think that there are people afraid of that and say, ‘AI isn’t real, go meet some real friends.’ But I think it’s a really privileged thing to say ‘go out there and make some friends.’ People might have social anxiety or find it hard to be in social situations.”

Sometime in the mid-late 2000s (2007? 2008?) I was diagnosed with, among other things, an anxiety disorder. A few years later, a new PCP told me I was eligible to join a research study for people with anxiety and depression. It was all online and it paid like fifty bucks and so I did it.

It turned out to be a series of videos, a web application, and an “online support group”. There is a similar-sounding program with the same name still online, but as I cannot tell if they are the same project I will not be naming it.

I will tell you that I filed a complaint with the university Institutional Review Board because:

- Our usernames for the online support group forum were our first names followed by four digits – I cannot speak for anyone else, but the four digits in my name were the month and day of my birthdate.

- The only person who communicated directly with us during the study, a research team member who signed their emails with their name followed by “,BS”, once sent an email with every group member’s email address in the CC field, not the BCC field.

It was a mostly-self-paced Cognitive Behavioral Therapy workbook in web form. You’d watch a video about a cognitive distortion and a technique to practice, and then you’d practice that technique on your own. There was a weekly set of questions you were to answer – things like “what was the hardest thing that happened this week” and “what was one of your best moments this week.”

I think the emailer mentioned above was reading our responses and, probably, coding them in order to provide qualitative data for their Principal Investigator’s project.

When you have diagnoses like mine, every medical appointment check-in involves the inventories of depression and anxiety – counting up the number of days you couldn’t stop worrying or couldn’t cheer yourself up or you didn’t feel like yourself in the past few weeks. All of the time, most of the time, some of the time, none of the time? But then someone goes over those responses in the appointment.

And when you fill it out, an automated recording doesn’t trigger, a woman’s recorded voice saying, with bland concern, “Wow, that sounds really difficult!” Which is what happened the first time I filled in the “What is the hardest thing that happened to you this week?” box.

So this app. Pretty clear that it was meant to increase the number of patients who could be processed by a single therapist (or, possibly, someone with a bachelor’s degree in psychology) in a day.

I know therapeutic conversation is heavily codified, and that it’s structured and that therapists are intensely trained to respond in ways that are consistent and helpful for their patients.

But that moment with the pre-recorded audio just felt horrifying, and alienating, and, yeah, anxiety-provoking.

So I am not sure what to do with Tran’s assertion that, I guess, on the internet nobody knows if you’re talking to a bot. I don’t want to put too much weight on the statement itself: it could just be an off-the-cuff remark.

But the idea that an audio recording, or a statistically-generated series of words, is like being responded to by another person…

So the therapist follows a script, but they have the potential not to; that they haven’t been dismissive or judgmental or cruel might be as important as what they do say? Because they could have deviated from that script.

I remember asking a teacher in Saturday morning catechism class why all of the responses in Mass were standardized; wouldn’t it be more meaningful if everyone said things in their own words? I didn’t understand that that collectiveness was the point. Everyone sitting and standing and singing and psalming and responding and praying in unison was because you were choosing to be a part of something bigger than yourself.

Generative language models are bigger than any of us, if we measure by ingested texts. But they’re limited by the number of previous conversations they can keep in memory before they become a blank slate again. And I guess because they’re all strings and statistics, those “memories” are either exact or they are gone; they don’t misremember or tactically forget.

I don’t know if people are shitty to other people online because they don’t believe there is a person on the receiving end. I don’t know if people in cars are shitty to people in other cars because they don’t see them as people. I think they might; I think that might be part of the point, the feeling, the emotion. If it’s liberating to be shitty toward another person?

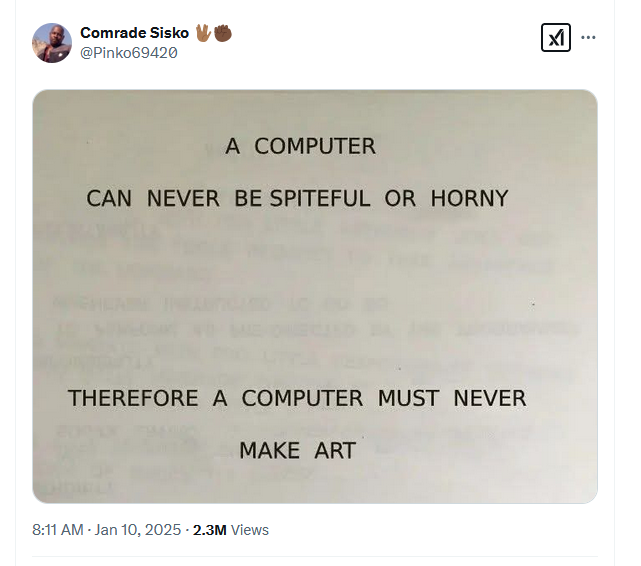

I think what I might be getting at is that statistically generated text doesn’t feel what it means to say what it says to another person. I think that may be one of the key differences between reading something written by a person (even if they’re following a script; even if they’re being dishonest; even if they’re being cruel) and reading something statistically generated.

https://en.wikipedia.org/wiki/Pierre_Menard,_Author_of_the_Quixote